Introduction

- Name: Nathan Bijnens
- Address: 9042 Ghent, Belgium
- Email: nathan@nathan.gs
- Telephone: +32 486 15 88 29
- Website: nathan.gs
- GitHub: github.com/nathan-gs
- @nathan_gs
- linkedin.com/in/nbijnens

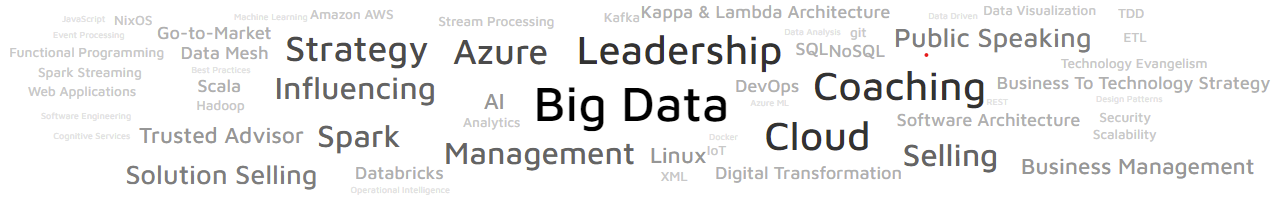

I am Nathan Bijnens, a Sr Solution Architect Manager, Data & AI, with an extensive background as a software developer and Solution Architect and a passion for Business, Cloud and Data. I guide my team, coach and mentor my colleagues, and set out the data and cloud strategy and go-to-market towards our strategic, commercial and public sector customers. I am responsible for nn M€ revenue and double-digit growth on Analytics, Apps+Data and AI/ML. I drive customer initiatives, leveraging Microsoft AI & Analytics on Azure to solve the biggest and most complex data challenges faced by Microsoft’s enterprise customers.

In a previous role, I was the Lead Cloud Solution Architect for the European Union Institutions and NATO at Microsoft, helping them become data-driven governments.

With a successful track record of building innovative systems in highly political and complex environments, I not only help envision what data can do for the business, I also analyze, give recommendations, design architectures and inspire teams. I build on my background and experience in data analytics and in building Big Data systems, especially real-time and messaging-based systems.

I enjoy working with clients and partners, from giving advice and talking about the business and technological value of Big Data and AI to imagining solutions. Working in an entrepreneurial, data-driven, innovative environment gives me energy.

I am a passionate speaker and evangelist on AI, Big Data, IoT and Cloud.